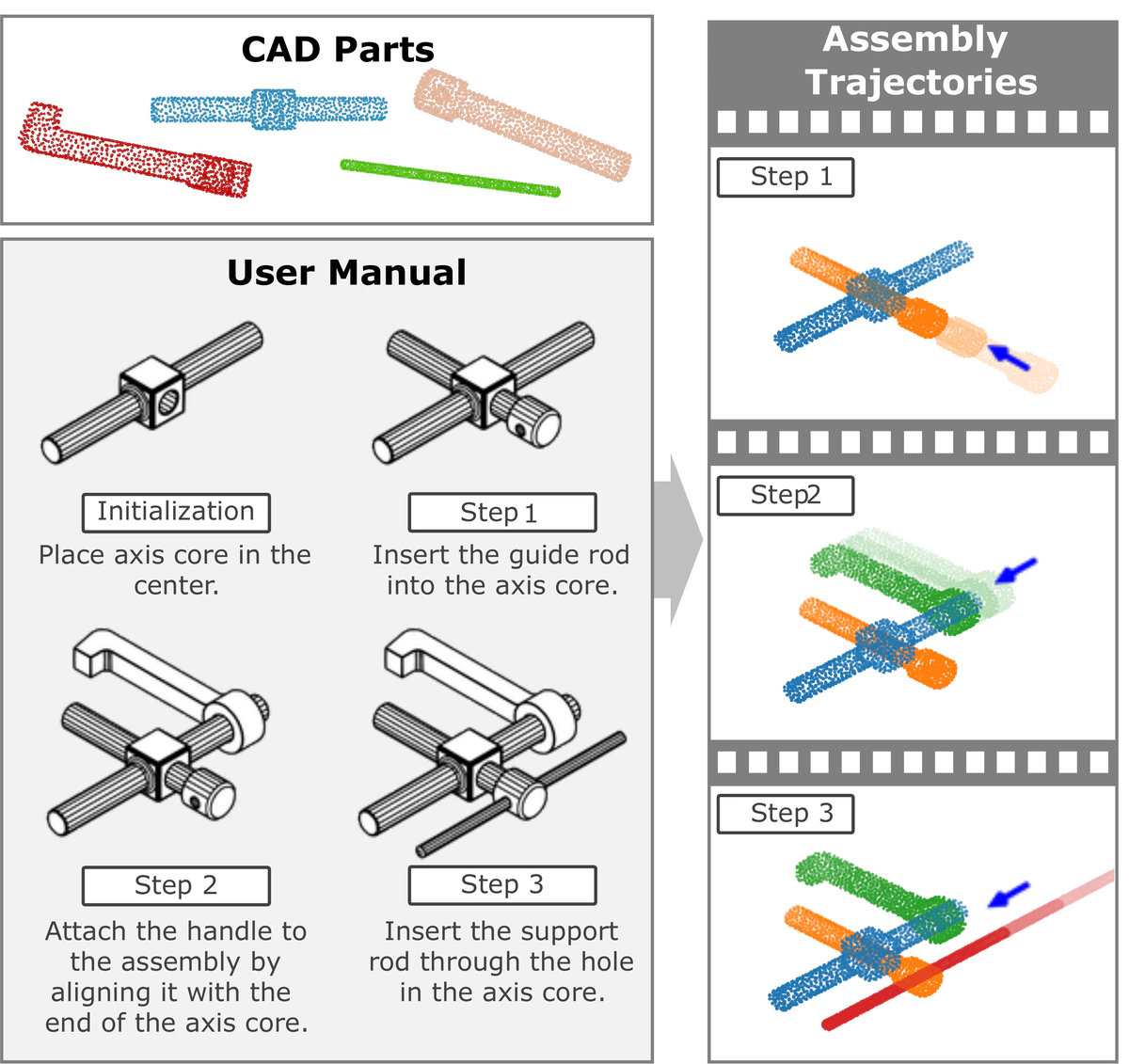

Given a step-wise manual with diagrams and text (lower left), we aim to assemble the corresponding set of 3D parts (upper left) in a virtual environment and output step-wise assembly trajectories, which can be rendered into 4D animations (right).

Abstract

Assembling objects from parts requires understanding multimodal instructions, linking them to 3D components, and predicting physically plausible 6-DoF motions for each assembly step. Existing datasets focus on simplified scenarios, overlooking the shape complexity and assembly trajectories found in industrial assemblies. We introduce AssemblyBench, a synthetic dataset of 2,789 industrial objects with multimodal instruction manuals, corresponding 3D part models, and part assembly trajectories. We also propose AssemblyDyno, a transformer-based model that uses the instruction manual and the 3D shape of each part to jointly predict the assembly order and the assembly trajectory of each part. AssemblyDyno outperforms prior work in both assembly pose estimation and trajectory feasibility, the latter evaluated with our physics-based simulations.
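To make the notion of a 6-DoF assembly trajectory concrete, the following minimal sketch represents each pose as a rotation (3 DoF) plus a translation (3 DoF) and a trajectory as a sequence of such poses. It is an illustration only, not AssemblyDyno's method: the helper names (`rotation_z`, `apply_pose`, `straight_line_trajectory`) are hypothetical, and the straight-line approach with a fixed rotation is a simplifying assumption chosen for brevity.

```python
import math

def rotation_z(angle_rad):
    """3x3 rotation matrix about the z-axis (one of the three rotational DoF)."""
    c, s = math.cos(angle_rad), math.sin(angle_rad)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]

def apply_pose(rotation, translation, point):
    """Map a point from the part frame to the world frame: R @ p + t."""
    return tuple(
        sum(rotation[i][j] * point[j] for j in range(3)) + translation[i]
        for i in range(3)
    )

def straight_line_trajectory(rotation, final_translation, retreat_dir, distance, steps):
    """Hypothetical trajectory: the part starts `distance` away from its final
    position along `retreat_dir` and slides in a straight line to the final
    pose, keeping its rotation fixed. Requires steps >= 2."""
    poses = []
    for k in range(steps):
        remaining = distance * (1.0 - k / (steps - 1))
        t = tuple(final_translation[i] + remaining * retreat_dir[i] for i in range(3))
        poses.append((rotation, t))
    return poses

# A part rotated 90 degrees about z, inserted downward along the z-axis.
trajectory = straight_line_trajectory(
    rotation_z(math.pi / 2), (1.0, 0.0, 0.0), (0.0, 0.0, 1.0), 0.2, 5
)
# World-frame path traced by one point of the part across the trajectory.
path = [apply_pose(R, t, (1.0, 0.0, 0.0)) for R, t in trajectory]
```

Each element of `trajectory` is one pose along a single assembly step; a full assembly under this representation would be an ordered list of such trajectories, one per part.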